Slingshots part deux: Meet Laude’s latest open research bets

An early look at projects tackling agent evaluation, continual learning, and compute efficiency.

Welcome to Forkable’s Open Profile column, where I go in-depth on key projects, startups, and figures from across the open source realm.

Last year, I worked with the folks at Laude Institute to write a feature piece around the launch of Terminal-Bench, an open source benchmark designed to measure how well AI agents complete real-world tasks in live terminal environments. The project quickly became the industry standard for evaluating agent performance, cited by major frontier model providers and adopted across the research community.

Laude Institute, co-founded by Databricks co-founder Andy Konwinski, backs upstream AI research with an emphasis on work that is built and released openly. Terminal-Bench was, in fact, part of a broader program run by the institute called Slingshots — an initiative that provides early-stage researchers with funding, compute, and operational support to ship ambitious work into the open. The first cohort included projects spanning benchmarks, frameworks, and agent tooling, several of which have since evolved into widely used infrastructure or spun out into companies.

While the nature of the projects vary, two key facets will generally hold true: AI will be a central ingredient, and qualifying projects will follow an entirely “open” ethos.

“Laude Institute is ‘open everything’ — open model, open weights, open source, open discourse, open publications,” Konwinski explained to me in an interview last year.

Now, Laude is announcing its second Slingshots cohort, a new batch of very early-stage projects tackling agent evaluation, continual learning, energy measurement, model architectures, and reinforcement learning systems.

Read the story in full below.

Building durable AI research in the open

AI research moves quickly, but most early-stage ideas stall before they’re widely adopted. Laude Institute’s Slingshots program is designed to intervene at that embryonic stage, backing “upstream projects” with funding, compute, and operational support to help teams ship their work.

The projects themselves can take many forms. Internally, Laude tracks them across categories spanning datasets, benchmarks, architectures, algorithms, models, agents, frameworks, and applications — a reflection of how wide the AI research stack has become.

Laude and its Slingshots program function re like a research accelerator (rather than a startup incubator), with teams selected based on potential impact to the field, rather than any specific commercial milestone. Most projects originate in leading academic labs, often from PhD researchers and faculty working at the edge of the field. And notably, Laude deploys resources quickly — in some cases within hours — allowing teams to accelerate promising lines of research.

“Success for these projects looks like adoption and users, rather than papers or citations,” Braden Hancock, research partner at Laude Institute and a Stanford PhD, told Forkable over email. “We’re looking for research that will be used.”

That philosophy is visible in how the first Slingshots cohort evolved. Terminal-Bench — developed through collaboration between researchers at Stanford and Laude — became a reference benchmark for evaluating AI agents operating in the command line. DSPy, meanwhile, grew into a widely adopted framework for programming language models. More recently, Harbor — part of Slingshots’ second batch — emerged as a broader agent evaluation framework building on ideas first explored in Terminal-Bench, with overlapping contributors.

“One thing we’ve been happy to see is a compounding effect, with projects in subsequent batches building on open infrastructure and insights from previous ones,” Hancock explained.

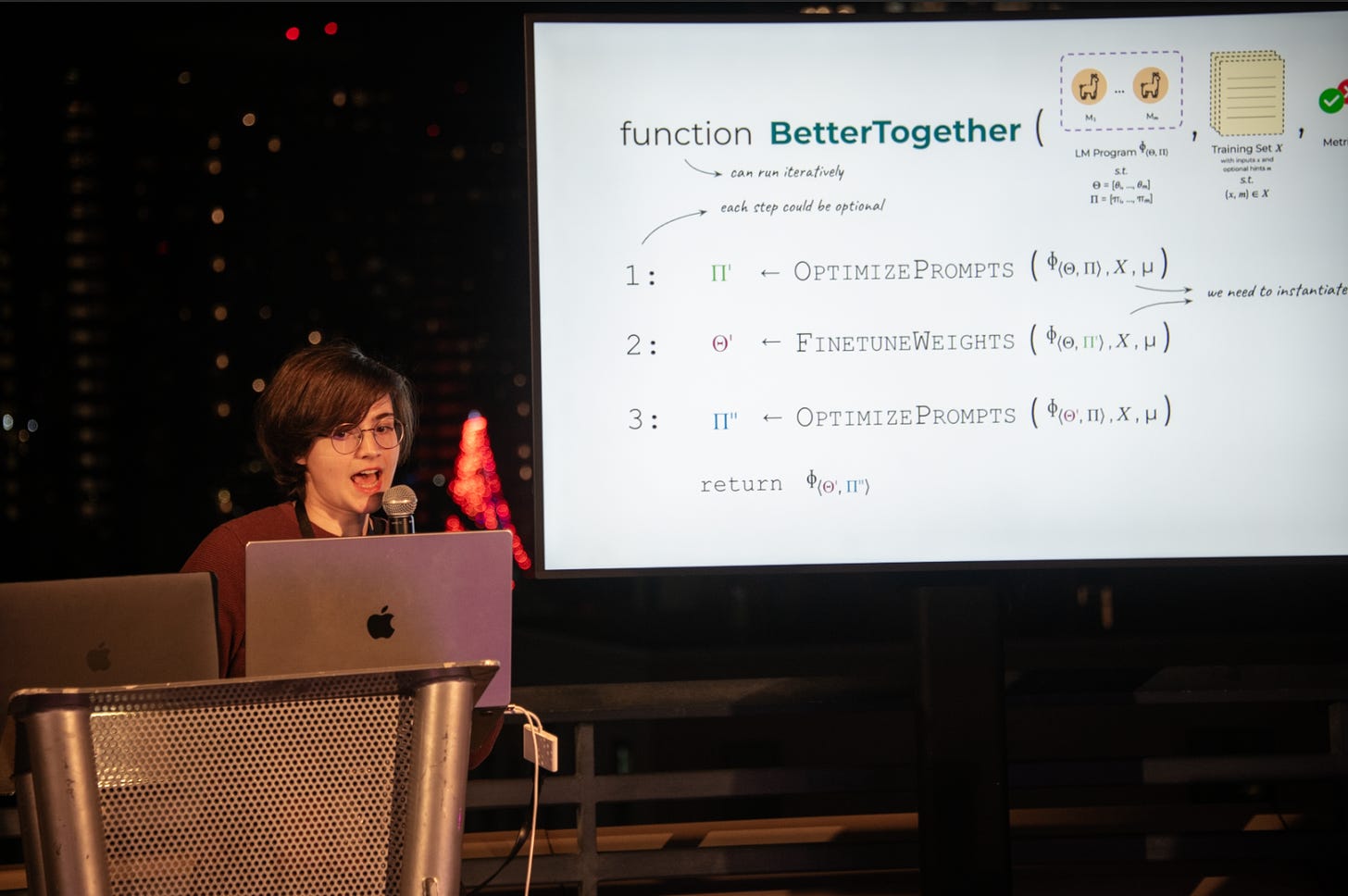

He said he expects that pattern to continue, with later cohorts building directly on infrastructure created in earlier ones. Harbor, as noted, extends work first explored in Terminal-Bench, while a forthcoming batch 3 will likely include a new benchmark designed to run on Harbor. From the first cohort, DSPy, is also now followed by Arbor, a reinforcement learning layer for DSPy, and BetterTogether, which combines DSPy prompt optimization with weight updates. Meanwhile, one of the current top-performing approaches on ARC-AGI-3 — another batch one project — comes from a company using RLMs (Recursive Language Models), included in batch two.

“This kind of compound interest from building in the open is something we hope and expect to see continue,” Hancock said.

A fresh batch

In its second batch, Hancock points to several themes that have surfaced independently across multiple institutions. Continual learning — enabling models to retain knowledge across sessions — appears in projects such as the not-yet-released Continual Learning Benchmarks from UC Berkeley and GUM (General User Model) from Stanford. QED-Nano, from Carnegie Mellon University, also explores how smaller models can become persistent solvers through sustained test-time compute and adaptation.

Energy efficiency is another emerging focus. Intelligence-per-watt, from Stanford University, and MLEnergy, from the University of Michigan, are both building “measurement and routing infrastructure” designed to quantify and reduce the energy footprint of machine learning workloads.

Given the early-stage nature of the program, not every project has a public URL or finalized name yet. Additional details will be published as teams release their work.

Slingshots // Two

Harbor: An agent evaluation framework for defining and running environment-based tasks at scale, now used to ship benchmarks including Terminal-Bench 2.0. (Laude Institute)

OpenThoughts-Agent: An end-to-end open setup for training and evaluating terminal agents using curated data, real environments, and reinforcement learning loops. (UC Berkeley)

SREGym: A unified platform for designing and evaluating AI agents for site reliability engineering, with safety guardrails for infrastructure work. (University of Illinois Urbana-Champaign)

Intelligence-per-watt: A benchmark suite for edge-cloud inference routing that measures when smaller local models can match frontier performance while reducing energy and cost. (Stanford University)

MLEnergy: Tooling and systems infrastructure to measure energy consumption across machine learning workloads, making energy a first-class metric. (University of Michigan)

ProjectASAP: Infrastructure that replaces “scan everything” data analysis with lightweight summaries, enabling faster and cheaper queries across massive datasets. (Carnegie Mellon University)

GUM (General User Model): A system that builds a persistent model of user behavior to enable proactive, context-aware AI assistance. (Stanford University)

Continual Learning Benchmarks: Benchmarks and methods designed to evaluate models that retain knowledge over time rather than resetting each session. (UC Berkeley)

QED-Nano: A 4B model trained with supervised fine-tuning and reinforcement learning that matches larger frontier models on Olympiad-level math proofs at significantly lower cost. (Carnegie Mellon University)

RLMs (Recursive Language Models): A framework that allows language models to write and execute programs to handle complex tasks with structured intermediate reasoning. (MIT)

BetterTogether: Optimization tooling that jointly tunes prompts and model weights to improve multi-step language model programs. (Stanford University)

Arbor: A reinforcement learning optimization layer for DSPy that automates agent workflow improvement at scale. (MIT)

Open Inference: Infrastructure that lowers barriers to trying models while creating a living dataset of real-world interactions to measure progress and failure modes. (UC Berkeley)

Unnamed (Melissa Pan): A forthcoming project building on prior large-scale research into production agent deployments, backed early to accelerate development. (UC Berkeley)